My k8s journey continues... Part III


Introduction

True, my love/hate relationship with k8s is still going on, but I have to say that, all in all, you can learn a lot, even if you don't use the know-how daily at work. For me, it provides an understanding of what my developers and ops people talk about when they prepare new environments and deployments.

Deploying standard applications to a k8s cluster is usually a mostly painless experience if you understand how it works and are willing to dig into the actual YAML files or the helm charts on github.com.

Basically, it comes down to matching the values.yaml file to the templates stored in the helm chart's templates folder or, if you use the k8s configuration files directly, knowing where to enter what.
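As a minimal sketch of that matching: a value defined in values.yaml is referenced from a template through the `.Values` object. The keys (`image.repository`, `image.tag`, `service.port`) and the name `my-app` are hypothetical and chosen only for illustration; they are not taken from any of the charts mentioned in this post.

```yaml
# --- File 1: values.yaml (hypothetical keys, for illustration only) ---
image:
  repository: example.org/my-app
  tag: v1.0.0
service:
  port: 80
---
# --- File 2: templates/deployment.yaml (separate file in the chart) ---
# Helm replaces each {{ .Values.* }} expression with the matching
# entry from values.yaml when it renders the chart.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-app
          image: "{{ .Values.image.repository }}:{{ .Values.image.tag }}"
          ports:
            - containerPort: {{ .Values.service.port }}
```

Running `helm template` against the chart folder prints the rendered manifests, which is a quick way to check that every value lands where you expect it.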

So I won't describe here how I installed my standard applications, like Node-RED, Grafana, Uptime Kuma, CouchDB and other standard applications you can run at home for your pleasure, needs or convenience.

Today, I want to describe how I figured out how to deploy my own little, very simple CMDB application to k8s. The CMDB app is a very simple adaptation of Typicode's json-server, which can be found here.

My CMDB server, which ran for years on Docker, now has to move to k8s, since my 2007 Mac Mini has moved back into its original packaging and is no longer available for hosting it.

So let us start.

Preparation

The CMDB server ran on Docker with a compose file. Here it is:

version: '3.7'

volumes:
  cmdbfs:
    driver: local
    driver_opts:
      o: bind
      type: none
      device: /data/cmdb

networks:
  default:
    name: dhcp
    external: true

services:
  cmdb:
    build: .
    container_name: cmdb
    image: 'arjsnet/jsondb/cmdb:latest'
    hostname: cmdb.arjs.net
    ports:
      - 80:80
    volumes:
      - cmdbfs:/usr/database
    restart: always
    networks:
      default:
        ipv4_address: 192.168.1.192
    dns:
      - 192.168.1.234
      - 192.168.1.1
    dns_search:
      - fritz.box

To easily transform this docker-compose file into a helm chart, there is a neat little tool called "kompose", which can be found here.

After installing it, follow the "User Guide" to convert your docker-compose.yaml to a basic helm chart.

Tip: Rename your docker-compose.yaml first to the desired chart name. So in my case, I renamed it to cmdb.yaml, so the result was generated in a folder named "cmdb". Or use the option "--chart-name cmdb".

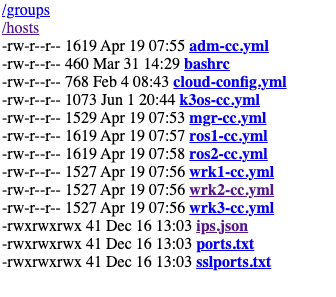

In the newly created folder, you find the following files:

- Chart.yaml

- README.md

- templates folder, with cmdb-deployment.yaml, cmdb-service.yaml and cmdbfs-persistentvolumeclaim.yaml

Of course, if you try to deploy this basic helm chart, you will fail. Some files are missing.

I had to add a logo file "cmdb.png" (simply a generic logo created by a tool), an empty "values.yaml" file (I know, it is kind of lazy of me to change the values directly in the template files) and a "cmdb-ingress.yaml" file, so I could have a nice domain name to access it.

The domain name with a static load balancer IP had already been added to Pi-hole DNS, so there was no delay in testing it.
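For orientation, an ingress file of this kind might look roughly like the sketch below. It is not the actual "cmdb-ingress.yaml" from my chart: the host name `cmdb.fritz.box` is hypothetical, the service name `cmdb` and port 80 are assumed from the compose file, and `ingressClassName: traefik` assumes the Traefik ingress controller that k3s ships by default.

```yaml
# templates/cmdb-ingress.yaml (illustrative sketch, not the original file)
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: cmdb
spec:
  ingressClassName: traefik        # assumption: k3s default ingress controller
  rules:
    - host: cmdb.fritz.box         # hypothetical host name, must resolve via DNS
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: cmdb         # assumed name of the generated service
                port:
                  number: 80       # port published in the compose file
```

The host entry has to match the name registered in DNS, otherwise the ingress controller will not route the request to the service.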

1st Deployment

Of course, it went wrong. Spelling errors, indentation errors and wrong syntax. So make sure you use a checking tool of your choice to avoid those.

After that, the real troubleshooting began.

Problems I encountered following the 1st deployment:

- Settings in the docker-compose.yaml file that didn't translate to a helm chart

- How to add an insecure registry to Kubernetes

- Tag problems of the actual container to deploy

- Push not working (spoiler: Of course a DNS problem)

- The pull not working (another spoiler: DNS, again)

- Again, build problems

Setting fixes

The first conversion to a chart was painless, because kompose can handle docker-compose.yaml files very well. But after the first deployment I noticed that some settings in the original docker-compose file were not needed, so I removed them:

Remove network settings:

networks:
  default:
    name: dhcp
    external: true
...
    networks:
      default:
        ipv4_address: 192.168.1.192

DNS removal:

    dns:
      - 192.168.1.234
      - 192.168.1.1
    dns_search:
      - fritz.box
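With both blocks removed, the compose file would look roughly like this. It is a reconstruction from the compose file shown in the Preparation section, with nothing else changed, so the image still carries the "latest" tag at this point:

```yaml
# docker-compose file after removing the network and DNS settings
# (reconstructed from the original file, not a verbatim copy)
version: '3.7'

volumes:
  cmdbfs:
    driver: local
    driver_opts:
      o: bind
      type: none
      device: /data/cmdb

services:
  cmdb:
    build: .
    container_name: cmdb
    image: 'arjsnet/jsondb/cmdb:latest'
    hostname: cmdb.arjs.net
    ports:
      - 80:80
    volumes:
      - cmdbfs:/usr/database
    restart: always
```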

Insecure registry fixes

By default, k3s pulls container images from Docker Hub. So usually you would upload your image to Docker Hub and k3s would load it from there.

I decided against it. I want to avoid putting stuff on Docker Hub which is just for my own purposes. So I looked for a way to add my own image registry to the k3s cluster.

Some googling brought me to the blog of Luis Enrique Limon - "Soo broken" with an article that explains exactly that. Link here.

Additionally, I consulted the official documentation of "k3s". Link here.

So I created the initial "registries.yaml" with this content:

mirrors:
  "registry.fritz.box":
    endpoint:
      - "http://registry.fritz.box"
configs:
  # entries under "configs" are keyed by the registry host
  "registry.fritz.box":
    tls:
      insecure_skip_verify: true

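I found that the "tls" option is needed if, like me, you have no SSL certificates in place.

This file needs to be distributed to all k3s servers and agents (k3s reads it from /etc/rancher/k3s/registries.yaml and has to be restarted to pick up changes), so that every node can download the container image.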

Tag fixes

The experienced and observant reader will have noticed that I used a big "NO NO" in my docker-compose file. Yes, it is the "latest" tag on the container image. The best practice is to use an explicit version tag. Consequently, I had to tag my image.

docker tag 0e5574283393 arjsnet/jsondb/cmdb:v1.0.0

Since I had not used a tag before, apart from "latest", which docker assigns by default, I now had two entries listed in the local image information.

So another fix was needed, this time in the docker-compose file and also in the generated chart template cmdb-deployment.yaml:

# docker-compose
image: 'arjsnet/jsondb/cmdb:v1.0.0'

# cmdb-deployment.yaml
image: registry.fritz.box/arjsnet/jsondb/cmdb:v1.0.0

Push and pull fixes

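Of course, if you don't push the image to the remote registry, it doesn't work. I found that out the hard way, when I got errors installing the helm chart on my k3s cluster: I had forgotten to push the local image. Note that the image needs a tag that includes the registry host before it can be pushed there:

docker tag arjsnet/jsondb/cmdb:v1.0.0 registry.fritz.box/arjsnet/jsondb/cmdb:v1.0.0

docker push registry.fritz.box/arjsnet/jsondb/cmdb:v1.0.0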

With that fix, the k3s cluster could finally pull the image when I used the helm command to install my little cmdb:

helm upgrade --install cmdb cmdb --values cmdb/values.yaml

The final build problem fixed

Again, the experienced and observant reader will have noticed another problem, which I found last. As I wrote in earlier posts, my former docker host was an Intel Mac Mini from 2007 running an Ubuntu distribution. So my cmdb image was built on an Intel machine. This image was tagged and pushed to my registry, which now ran on an Arm-based Raspberry Pi.

Storing it there was no problem, but the image was built for the Intel architecture, while my k3s cluster runs on Arm-based Raspberry Pis. And of course, that produced errors on the k3s cluster.

So I had to find a way to build the image for my cmdb on an arm processor. After weighing all options, I decided to take out one k3s agent and convert it to an old-fashioned docker host.

The Arm build system was not the only reason. After using my k3s cluster for a while, I had noticed that the Raspberry Pi Zero 2W was not powerful enough.

I will spare you the steps needed to remove k3s (thankfully there is a convenient uninstall script, "/usr/local/bin/k3s-uninstall.sh") and to install and prepare docker.

After that extra work, I was finally able to build my Dockerfile on an arm-based processor.

FROM node:current-alpine
WORKDIR /usr/database
RUN npm install -g json-server
HEALTHCHECK --interval=120s --timeout=15s --start-period=30s CMD wget -S --spider http://localhost/
EXPOSE 80
ENTRYPOINT ["json-server"]
CMD ["--host", "0.0.0.0", "--port", "80", "--watch", "db.json"]
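One thing worth keeping in mind: Kubernetes does not evaluate the Dockerfile `HEALTHCHECK` instruction. Health checks are configured as probes on the container instead. A rough equivalent for the deployment might look like the sketch below. It assumes the container in cmdb-deployment.yaml is named `cmdb` and simply reuses the timings from the `HEALTHCHECK` line; it is not part of the chart kompose generated.

```yaml
# cmdb-deployment.yaml (excerpt, sketch): probe mirroring the Dockerfile HEALTHCHECK
spec:
  template:
    spec:
      containers:
        - name: cmdb                  # assumed container name
          image: registry.fritz.box/arjsnet/jsondb/cmdb:v1.0.0
          ports:
            - containerPort: 80
          livenessProbe:
            httpGet:
              path: /
              port: 80
            initialDelaySeconds: 30   # from --start-period=30s
            periodSeconds: 120        # from --interval=120s
            timeoutSeconds: 15        # from --timeout=15s
```

With a liveness probe like this, the kubelet restarts the container when json-server stops answering on port 80.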

Well, the rest is business as usual: build, tag, push, install.

And here is the result, my cmdb server, based on json-server running in my k3s cluster:

Conclusion

All in all, I learned a lot in this move from my docker-based setup to a k3s cluster-based setup.

Yes, there were pitfalls and a lot of frustration, a lot of researching the internet, reading a lot, understanding concepts and of course a good amount of trial and error.

But as Yoda once said: "The greatest teacher, failure is." My way was paved with failures, and I learned a lot that way.

As always, apply this rule: "Questions? Feel free to ask. If you have ideas or find errors, mistakes, problems or other things which bother or delight you, use your common sense and be a self-reliant human being."

Have a good one. Alex