My love-hate relationship with k8s continues

Yup, another chapter in my love-hate relationship is the topic of this blog post.

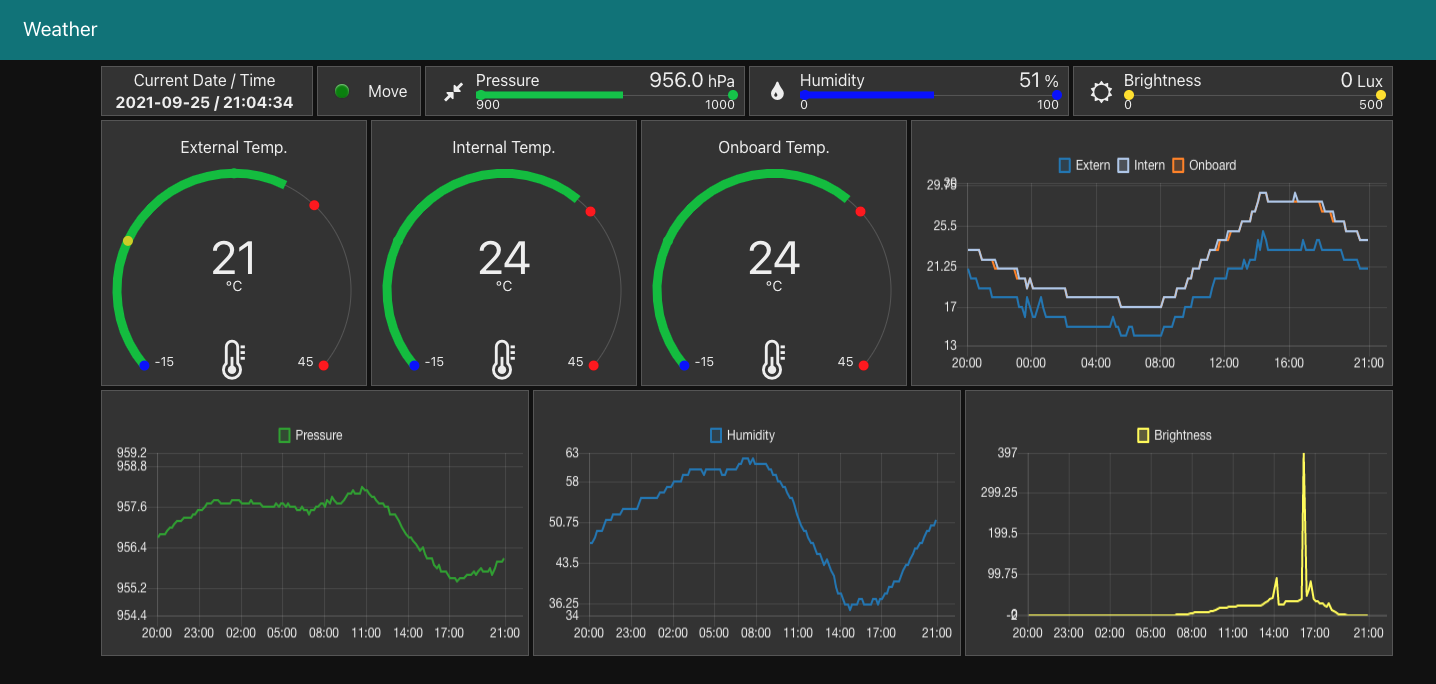

So, a few days ago, on the morning of my birthday to be exact, I wanted to check the temperature on my node-red driven weather panel. But: "page cannot be found"! So naturally I thought, "WTF, come on, it is my birthday!" Nevertheless, I made coffee and checked what was going on.

Soon I found out it had to do with k3s on k3os. Yes, I run k3s on k3os, which itself is a takeover installation on top of Ubuntu. That was the only way I got k3os onto my Raspi 4B+, because I couldn't get k3os to boot on the Raspi directly. Anyway, back to the topic.

OK, so naturally I tried Portainer first, since it is my lightweight choice for k8s management. It was not working, so I ssh'ed into my k3os-server and did the natural thing: "kubectl get pods -A". k3s itself was running, but most of the pods I needed (node-red, portainer, elk) were not ready, and many of them were stuck in Pending. It looked like this:

k3os-server [~]$ kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

elk kibana-kibana-54c46c54d6-nx9nr 0/1 Pending 0 13h

elk logstash-logstash-0 0/1 Pending 0 13h

portainer portainer-agent-5988b5d966-5cgkz 0/1 Pending 0 3h45m

elk elasticsearch-master-0 0/1 Running 3 13h

portainer svclb-portainer-agent-9xssw 1/1 Running 2 3h43m

elk svclb-logstash-logstash-47ksf 1/1 Running 3 13h

elk svclb-kibana-kibana-8wp5z 1/1 Running 3 13h

node-red node-red-6678875c4c-cxgpk 0/1 Running 3 3h45m

kube-system svclb-traefik-lqcr7 2/2 Running 4 3h51m

k3os-system system-upgrade-controller-8bf4f84c4-dwmnv 1/1 Running 14 78d

k3os-system apply-k3os-latest-on-k3os-server-with-de0d67248001a3a3b02-8bppd 0/1 Init:1/2 0 9m9s

kube-system metrics-server-7566d596c8-rpx6w 0/1 Pending 0 107s

kube-system traefik-56f7bf7775-wsjb6 0/1 Pending 0 74s

kube-system local-path-provisioner-64d457c485-pv9w4 0/1 Pending 0 61s

kube-system coredns-5d69dc75db-7jln6 0/1 Pending 0 47s

system-upgrade system-upgrade-controller-85b58fbc5f-xft58 0/1 Pending 0 3s
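With a listing this long it is easy to overlook which pods are actually in trouble. As a minimal sketch, the output can be piped through a small filter that keeps only pods that are not Running or whose READY count is short. The helper name `unhealthy_pods` is made up for this post; it only assumes the default column layout shown above.

```shell
# Print pods that are not fully up, reading `kubectl get pods -A` output on stdin.
# unhealthy_pods is a hypothetical helper name, not part of kubectl.
unhealthy_pods() {
  awk 'NR > 1 && $4 != "Completed" {
         split($3, ready, "/")            # READY column, e.g. "0/1"
         if ($4 != "Running" || ready[1] != ready[2])
           print $1 "/" $2 ": " $4 " (" $3 ")"
       }'
}
```

Usage would be `kubectl get pods -A | unhealthy_pods`. Pods in Completed state are skipped on purpose, since finished jobs always show 0/1.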

So I ran "kubectl describe pod/portainer-agent-5988b5d966-5cgkz -n portainer" to figure out why Portainer was not working. This was the output:

k3os-server [~]$ kubectl describe pod/portainer-agent-5988b5d966-5cgkz -n portainer

Name: portainer-agent-5988b5d966-5cgkz

Namespace: portainer

Priority: 0

Node: <none>

Labels: app=portainer-agent

pod-template-hash=5988b5d966

Annotations: <none>

Status: Pending

IP:

IPs: <none>

Controlled By: ReplicaSet/portainer-agent-5988b5d966

Containers:

portainer-agent:

Image: portainer/agent:latest

Port: 9001/TCP

Host Port: 0/TCP

Environment:

LOG_LEVEL: DEBUG

AGENT_CLUSTER_ADDR: portainer-agent-headless

KUBERNETES_POD_IP: (v1:status.podIP)

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from portainer-sa-clusteradmin-token-zdg7s (ro)

Conditions:

Type Status

PodScheduled False

Volumes:

portainer-sa-clusteradmin-token-zdg7s:

Type: Secret (a volume populated by a Secret)

SecretName: portainer-sa-clusteradmin-token-zdg7s

Optional: false

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 92m default-scheduler 0/1 nodes are available: 1 node(s) were unschedulable.
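The interesting part of that wall of text is the single FailedScheduling event at the bottom. A shorter route, sketched here under the assumption that the events have not yet expired from the cluster, is to ask for exactly those events across all namespaces. The wrapper name `scheduling_failures` is hypothetical; the flags are regular `kubectl get` options.

```shell
# Show only FailedScheduling events, oldest first, for every namespace.
# scheduling_failures is a hypothetical wrapper name.
scheduling_failures() {
  kubectl get events --all-namespaces \
    --field-selector reason=FailedScheduling \
    --sort-by=.lastTimestamp
}
```

If every pending pod reports the same "node(s) were unschedulable" message, the problem is the node and not the individual deployments.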

OK, there was a clue: "unschedulable". Aha! So I did the next logical thing, at least for me: I entered "kubectl get node", and the output was:

k3os-server [~]$ kubectl get node

NAME STATUS ROLES AGE VERSION

k3os-server Ready,SchedulingDisabled control-plane,master 233d v1.19.15+k3s1

Aha! "Ready,SchedulingDisabled". But why was scheduling disabled? Naturally I checked k3os on GitHub, and of course a new version had been released, so I immediately suspected the auto-upgrade feature. I made sure auto upgrade was configured correctly, rebooted, and waited 30 minutes while I finally had my breakfast. But when I checked again, no success: still the same output.
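The pod listing further up supports that suspicion: the pod "apply-k3os-latest-on-k3os-server-with-..." in the k3os-system namespace was sitting at Init:1/2. As far as I know, the system-upgrade-controller cordons or drains a node before it upgrades it, so a stuck upgrade job could leave the node in exactly this state. That is an assumption on my part, not something I verified. A rough sketch for looking at the upgrade machinery follows; `upgrade_status` is a made-up helper, and it assumes the Plan custom resource (plans.upgrade.cattle.io) is installed, which the node label plan.upgrade.cattle.io/k3os-latest suggests.

```shell
# Show upgrade plans plus the jobs and pods that carry them out.
# upgrade_status is a hypothetical helper; the namespace defaults to k3os-system,
# which is where the upgrade pods showed up in the listing.
upgrade_status() {
  ns=${1:-k3os-system}
  kubectl get plans.upgrade.cattle.io --all-namespaces
  kubectl get jobs,pods -n "$ns" -o wide
}
```

From there, "kubectl describe pod" on the apply-k3os-latest pod should show which init container it is waiting on.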

Well, so I did another thing most of you have done before: Stack Overflow to the rescue. There I found this little line:

k3os-server [~]$ kubectl patch node k3os-server -p "{\"spec\":{\"unschedulable\":false}}"

Before applying it, I made sure that my server really was tainted "unschedulable":

k3os-server [~]$ kubectl describe node

Name: k3os-server

Roles: control-plane,master

Labels: beta.kubernetes.io/arch=arm64

beta.kubernetes.io/instance-type=k3s

beta.kubernetes.io/os=linux

k3os.io/mode=local

k3os.io/upgrade=enabled

k3os.io/version=v0.19.15-k3s1r0

k3s-upgrade=true

k3s.io/hostname=k3os-server

k3s.io/internal-ip=192.168.0.7

kubernetes.io/arch=arm64

kubernetes.io/hostname=k3os-server

kubernetes.io/os=linux

node-role.kubernetes.io/control-plane=true

node-role.kubernetes.io/master=true

node.kubernetes.io/instance-type=k3s

plan.upgrade.cattle.io/k3os-latest=ac5fabe1f913dcad262a3c711eba31016d3297ac24d3db12f8daaa8d

Annotations: flannel.alpha.coreos.com/backend-data: {"VtepMAC":"4a:a8:ea:c2:69:6c"}

flannel.alpha.coreos.com/backend-type: vxlan

flannel.alpha.coreos.com/kube-subnet-manager: true

flannel.alpha.coreos.com/public-ip: 192.168.0.7

k3s.io/node-args:

["server","--node-label","k3os.io/upgrade=enabled","--node-label","k3os.io/mode=local","--node-label","k3os.io/version=v0.19.15-k3s1r0"]

k3s.io/node-config-hash: UVUEL5HG5BTFEVR6MMGXJKHXJDX6XUREL7WJWDOM3IRLYCPS7JAQ====

k3s.io/node-env:

{"K3S_CLUSTER_SECRET":"********","K3S_DATA_DIR":"/var/lib/rancher/k3s/data/dcd31a7b8a5c2021f385fc354f38fe7b3fb758660905ed62a0faf7307e701fe...

node.alpha.kubernetes.io/ttl: 0

volumes.kubernetes.io/controller-managed-attach-detach: true

CreationTimestamp: Tue, 02 Feb 2021 12:23:32 +0000

Taints: node.kubernetes.io/unschedulable:NoSchedule

Unschedulable: true

Lease:

HolderIdentity: k3os-server

AcquireTime: <unset>

RenewTime: Thu, 23 Sep 2021 10:23:30 +0000

Conditions:

Type Status LastHeartbeatTime LastTransitionTime Reason Message

---- ------ ----------------- ------------------ ------ -------

NetworkUnavailable False Thu, 23 Sep 2021 08:48:26 +0000 Thu, 23 Sep 2021 08:48:26 +0000 FlannelIsUp Flannel is running on this node

MemoryPressure False Thu, 23 Sep 2021 10:18:47 +0000 Tue, 02 Feb 2021 12:23:32 +0000 KubeletHasSufficientMemory kubelet has sufficient memory available

DiskPressure False Thu, 23 Sep 2021 10:18:47 +0000 Tue, 02 Feb 2021 12:23:32 +0000 KubeletHasNoDiskPressure kubelet has no disk pressure

PIDPressure False Thu, 23 Sep 2021 10:18:47 +0000 Tue, 02 Feb 2021 12:23:32 +0000 KubeletHasSufficientPID kubelet has sufficient PID available

Ready True Thu, 23 Sep 2021 10:18:47 +0000 Thu, 23 Sep 2021 08:48:41 +0000 KubeletReady kubelet is posting ready status

Addresses:

InternalIP: 192.168.0.7

Hostname: k3os-server

Capacity:

cpu: 4

ephemeral-storage: 153593272Ki

memory: 7997752Ki

pods: 110

Allocatable:

cpu: 4

ephemeral-storage: 149415534885

memory: 7997752Ki

pods: 110

System Info:

Machine ID: 47db285f8c257cdd3191ef3f614ce3ab

System UUID: 47db285f8c257cdd3191ef3f614ce3ab

Boot ID: 259c932f-16d4-4674-8232-6b98edc4096c

Kernel Version: 5.4.0-1028-raspi

OS Image: k3OS v0.19.15-k3s1r0

Operating System: linux

Architecture: arm64

Container Runtime Version: containerd://1.4.9-k3s1

Kubelet Version: v1.19.15+k3s1

Kube-Proxy Version: v1.19.15+k3s1

PodCIDR: 10.42.0.0/24

PodCIDRs: 10.42.0.0/24

ProviderID: k3s://k3os-server

Non-terminated Pods: (7 in total)

Namespace Name CPU Requests CPU Limits Memory Requests Memory Limits AGE

--------- ---- ------------ ---------- --------------- ------------- ---

elk elasticsearch-master-0 1 (25%) 1 (25%) 2Gi (26%) 2Gi (26%) 13h

portainer svclb-portainer-agent-9xssw 0 (0%) 0 (0%) 0 (0%) 0 (0%) 3h46m

elk svclb-logstash-logstash-47ksf 0 (0%) 0 (0%) 0 (0%) 0 (0%) 13h

elk svclb-kibana-kibana-8wp5z 0 (0%) 0 (0%) 0 (0%) 0 (0%) 13h

kube-system svclb-traefik-lqcr7 0 (0%) 0 (0%) 0 (0%) 0 (0%) 3h54m

k3os-system system-upgrade-controller-8bf4f84c4-dwmnv 0 (0%) 0 (0%) 0 (0%) 0 (0%) 78d

k3os-system apply-k3os-latest-on-k3os-server-with-de0d67248001a3a3b02-8bppd 0 (0%) 0 (0%) 0 (0%) 0 (0%) 12m

Allocated resources:

(Total limits may be over 100 percent, i.e., overcommitted.)

Resource Requests Limits

-------- -------- ------

cpu 1 (25%) 1 (25%)

memory 2Gi (26%) 2Gi (26%)

ephemeral-storage 0 (0%) 0 (0%)

Events: <none>
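Only two lines of that output matter for this problem. A small filter, sketched here with the hypothetical name `node_schedulability`, pulls out just the node name, the taints, and the Unschedulable flag. It relies on those fields starting at the beginning of a line, as they do in the output above.

```shell
# Keep only the scheduling-relevant lines of `kubectl describe node` (read from stdin).
# node_schedulability is a hypothetical helper name.
node_schedulability() {
  grep -E '^(Name|Taints|Unschedulable):'
}
```

Usage would be `kubectl describe node | node_schedulability`.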

And there it was: "Taints: node.kubernetes.io/unschedulable:NoSchedule". OK, so I applied the fix I had found in that Stack Overflow answer, and this was the result:

k3os-server [~]$ kubectl patch node k3os-server -p "{\"spec\":{\"unschedulable\":false}}"

node/k3os-server patched

k3os-server [~]$ kubectl get node

NAME STATUS ROLES AGE VERSION

k3os-server Ready control-plane,master 233d v1.19.15+k3s1
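For what it is worth, kubectl has a built-in command for this: "kubectl uncordon" clears the same spec.unschedulable field that the patch sets to false. A minimal sketch that only uncordons when the node is actually cordoned could look like this; `uncordon_if_needed` is a hypothetical helper name.

```shell
# Uncordon a node only if spec.unschedulable is currently true.
# uncordon_if_needed is a hypothetical helper name.
uncordon_if_needed() {
  node=$1
  # The jsonpath query prints "true" for a cordoned node and nothing otherwise.
  if [ "$(kubectl get node "$node" -o jsonpath='{.spec.unschedulable}')" = "true" ]; then
    kubectl uncordon "$node"
  else
    echo "$node is already schedulable"
  fi
}
```

Called as `uncordon_if_needed k3os-server`. If something like an upgrade job is still running, it may well cordon the node again afterwards.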

And of course a reboot. After some distractions, it was my birthday after all, I checked again, and the update was done and apps worked again:

k3os-server [~]$ kubectl get node

NAME STATUS ROLES AGE VERSION

k3os-server Ready control-plane,master 233d v1.20.11+k3s1

k3os-server [~]$ kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

elk elasticsearch-master-0 1/1 Running 0 44m

kube-system metrics-server-86cbb8457f-hnnr7 1/1 Running 1 42m

kube-system local-path-provisioner-5ff76fc89d-jqsqt 1/1 Running 2 42m

kube-system coredns-6488c6fcc6-v6zs2 1/1 Running 1 42m

k3os-system system-upgrade-controller-c6c9b4c5d-6l8wq 1/1 Running 2 42m

kube-system traefik-7b6b5b8795-6jxpn 1/1 Running 0 40m

elk svclb-kibana-kibana-9n7xg 1/1 Running 0 40m

elk svclb-logstash-logstash-gsmnb 1/1 Running 0 40m

portainer svclb-portainer-agent-rtq8g 1/1 Running 0 40m

kube-system svclb-traefik-rzz6w 2/2 Running 0 40m

portainer portainer-agent-5988b5d966-jdjvb 1/1 Running 4 48m

elk logstash-logstash-0 1/1 Running 1 44m

elk kibana-kibana-54c46c54d6-84g5k 1/1 Running 3 4m14s

node-red node-red-68fd7b8bdd-d9s88 1/1 Running 0 42m

To be honest, I would rather have been spared this "crisis", but it happened and I learned something from it. That's a good thing. And of course it showed once more how valuable Stack Overflow is and how much easier it makes our lives in I.T.

But that brings me back to my love-hate relationship. Why is it so hard to find problems with k8s? I'm still not sure if what I did was OK, but it worked, and that is what counts. But can I be sure it will not happen again when the next update of k3os is released? I don't know.
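One cheap safeguard would be to notice the cordon earlier than "my weather panel is gone". As a sketch, a filter like the following reads "kubectl get nodes --no-headers" and prints every node whose STATUS contains SchedulingDisabled. The name `cordoned_nodes` is made up, and how to run it regularly (cron, a node-red flow, whatever) is left open.

```shell
# Print nodes whose STATUS column contains SchedulingDisabled.
# Reads `kubectl get nodes --no-headers` on stdin; exits 0 if any node is cordoned, 1 otherwise.
# cordoned_nodes is a hypothetical helper name.
cordoned_nodes() {
  awk '$2 ~ /SchedulingDisabled/ { print $1; found = 1 } END { exit !found }'
}
```

For example: `kubectl get nodes --no-headers | cordoned_nodes && echo "cordoned node found"`.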

Of course it is k3s on k3os, which is not the typical k8s environment, but I had a Pi, time, and interest, so why not. And of course the failure surely had something to do with me not liking how k8s is configured, so I probably did something wrong when I set up auto upgrade for k3os. In my defense, I can only say it worked before: at some point auto upgrade must have worked, otherwise the node would not have been upgraded to "v1.19.15+k3s1".

Anyhow, I don't think it is k3s or k3os which caused the problem, but at this point, I don't care. I simply want to see my temperature. :-)

As always, apply this rule: "Questions? Feel free to ask. If you have ideas or find errors, mistakes, problems or other things which bother or delight you, use your common sense and be a self-reliant human being."

Have a good one. Alex